Task Descriptions

Challenge 1: Target Detection and Classification (Mandatory)

- Objective: Detect and classify persons (including individuals on deck, on the shore, and a floating mannequin or dummy in the water), vessels, and vehicles in image sequences.

- Provide bounding boxes and class labels of detected objects in the given format.

- Sensors: Image sequences from multiple sensor types are provided:

- Ground Sensors: Visible (RGB), Thermal, UV, and SWIR.

- UAV Sensors: Visible (RGB) and Thermal.

- Training data with ground-truth bounding boxes and class labels is provided.

- Test data is provided. Results should be submitted for all test sequences if possible (for a comprehensive evaluation), or otherwise for at least two test sequences from different sensor types. For example, one sequence could come from the RGB ground sensor in scenario “bg2” and another from the thermal UAV in scenario “cy3”.

| Class | Example | Example |
| --- | --- | --- |
| Person (on deck) | | |
| Person (on the shore) | | |
| Person (in the water) | | |
| Vessel | | |
| Vehicle | | |

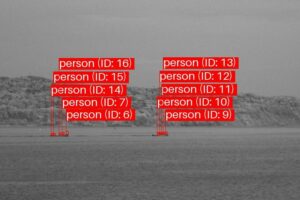

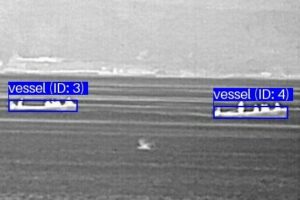

Challenge 2: Long-Term Target Tracking (Optional)

- Objective: Track persons, vessels, and vehicles continuously over image sequences.

- Objects should be detected and classified as persons, vessels, or vehicles.

- Each detected object should be assigned a unique identifier (or track ID) that remains consistent throughout the sequence. The ID should be provided along with the corresponding bounding box and class label in the given format.

- If an object disappears temporarily (due to occlusion or movement), it should be associated with the same ID when it reappears.

- Sensors: Image sequences are provided from multiple sensor types:

- Ground Sensors: Visible (RGB), Thermal, UV, and SWIR.

- UAV Sensors: Visible (RGB) and Thermal.

- Training data includes ground-truth bounding boxes, class labels, and track IDs of individual objects.

- Test data is provided. Results should be submitted for all test sequences if possible (for a comprehensive evaluation), or otherwise for at least two test sequences from different sensor types. For example, one sequence could come from the RGB ground sensor in scenario “bg2” and another from the thermal UAV in scenario “cy3”.
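The requirement that an object keeps its ID after a temporary disappearance can be illustrated with a minimal sketch. This is not the organisers' method or a suggested baseline: it is a greedy IoU matcher that keeps unmatched tracks alive for a few frames so that a reappearing object can be re-associated. The names `GreedyTracker`, `iou_threshold`, and `max_missed` are hypothetical, and boxes are assumed to be `(x_min, y_min, x_max, y_max)` in pixels, which may differ from the challenge XML format.

```python
from dataclasses import dataclass
from itertools import count

Box = tuple[float, float, float, float]  # assumed layout: (x_min, y_min, x_max, y_max)


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    ix = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    iy = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = ix * iy
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


@dataclass
class Track:
    track_id: int
    label: str
    box: Box
    missed: int = 0  # consecutive frames without a matching detection


class GreedyTracker:
    """Hypothetical greedy IoU tracker that tolerates short disappearances."""

    def __init__(self, iou_threshold: float = 0.3, max_missed: int = 30):
        self.iou_threshold = iou_threshold
        self.max_missed = max_missed
        self.tracks: list[Track] = []
        self._ids = count(1)

    def update(self, detections: list[tuple[str, Box]]) -> list[tuple[int, str, Box]]:
        """Assign a track ID to each (label, box) detection of one frame."""
        results = []
        unmatched = list(self.tracks)
        for label, box in detections:
            # Only tracks of the same class that are still unmatched are candidates.
            candidates = [t for t in unmatched if t.label == label]
            best = max(candidates, key=lambda t: iou(t.box, box), default=None)
            if best is not None and iou(best.box, box) >= self.iou_threshold:
                best.box, best.missed = box, 0  # same object: keep its ID
                unmatched.remove(best)
            else:
                best = Track(next(self._ids), label, box)  # new object: new ID
                self.tracks.append(best)
            results.append((best.track_id, best.label, best.box))
        # Tracks without a detection stay alive for up to max_missed frames.
        for track in unmatched:
            track.missed += 1
        self.tracks = [t for t in self.tracks if t.missed <= self.max_missed]
        return results
```

Calling `update` once per frame returns `(track_id, label, box)` tuples. A track that receives no detection is dropped only after `max_missed` consecutive frames, so an object that reappears close to its last known position keeps its ID. A practical tracker would likely add motion prediction or appearance features, since the last known box becomes a poor reference when the object or the camera moves during the occlusion.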

Challenge 3: Target Geolocation Approximation (Optional)

- Objective: Approximate the geolocations (longitudes and latitudes) of a specified object (either a person or vessel) over image sequences from a thermal UAV.

- Throughout each image sequence, ground-truth bounding boxes of objects are provided, for which geolocations should be approximated.

- Provide the geolocations of these bounding boxes in the form of longitude and latitude coordinates, in the given format.

- Telemetry data is provided, including focal length, digital zoom ratio, latitude, longitude, relative altitude, absolute altitude, gimbal yaw, gimbal pitch, gimbal roll, and timestamps corresponding to each image in the image sequence.

- The thermal UAV used to collect the data is a DJI MAVIC 3T. Its specifications are available at https://enterprise.dji.com/mavic-3-enterprise/specs.

- (Important) Please note that the UAV’s absolute altitude could be inaccurate since it primarily relies on barometric pressure sensors, which are susceptible to fluctuations in air pressure due to changing weather conditions. Therefore, using absolute altitude may require calibration.

- Sensor: Only image sequences from the thermal UAV sensor are provided.

- Training data includes ground-truth bounding boxes of objects, for which geolocations should be approximated, along with their ground-truth longitude and latitude coordinates.

- Test data is provided with ground-truth bounding boxes of objects, for which geolocations should be approximated. Results should be submitted for all test sequences if possible (for a comprehensive evaluation), or otherwise for at least two test sequences.
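One simple way to turn the telemetry fields listed above into a geolocation estimate is to cast a ray through a pixel (for example, the centre of a bounding box) and intersect it with a flat surface. The sketch below is only an illustration under several assumptions that are not stated in the challenge description and should be checked against the training data: gimbal yaw is measured clockwise from north, gimbal pitch is negative when looking down (with -90 degrees as nadir), gimbal roll is ignored, the relative altitude approximates the height above the water surface, and `focal_px` (the focal length in pixels, derived from focal length, digital zoom ratio, and sensor pixel pitch) is a hypothetical input.

```python
import math

EARTH_RADIUS_M = 6371000.0  # mean Earth radius, spherical approximation


def pixel_to_geolocation(u, v, width, height, focal_px,
                         uav_lon, uav_lat, rel_alt_m, yaw_deg, pitch_deg):
    """Return (longitude, latitude) where the ray through pixel (u, v) meets flat ground.

    Assumed conventions (verify against the data): yaw clockwise from north,
    pitch negative when looking down, roll ignored, pinhole camera.
    """
    yaw, pitch = math.radians(yaw_deg), math.radians(pitch_deg)
    cy_, sy_ = math.cos(yaw), math.sin(yaw)
    cp, sp = math.cos(pitch), math.sin(pitch)

    # Camera axes expressed in a local North-East-Down frame.
    forward = (cp * cy_, cp * sy_, -sp)   # optical axis
    right = (-sy_, cy_, 0.0)              # image x direction
    down = (sp * cy_, sp * sy_, cp)       # image y direction (forward x right)

    # Ray through the pixel, relative to the image centre.
    dx, dy = u - width / 2.0, v - height / 2.0
    ray = tuple(focal_px * f + dx * r + dy * d
                for f, r, d in zip(forward, right, down))
    if ray[2] <= 0.0:
        raise ValueError("ray does not intersect the ground (points at or above the horizon)")

    # Scale the ray so that it descends exactly rel_alt_m metres.
    scale = rel_alt_m / ray[2]
    north_m, east_m = scale * ray[0], scale * ray[1]

    # Convert metric offsets to degrees (small-offset approximation).
    lat = uav_lat + math.degrees(north_m / EARTH_RADIUS_M)
    lon = uav_lon + math.degrees(east_m / (EARTH_RADIUS_M * math.cos(math.radians(uav_lat))))
    return lon, lat
```

The function returns longitude first to match the [longitude, latitude] order used for the “coordinates” attribute. Because the result scales directly with the assumed height, an altitude error translates into a proportional position error at oblique viewing angles, which is one reason the altitude caveat above matters.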

Submission Guidelines

- Submissions can be made based on any of the provided test sequences. However, please submit results for test sequences from at least two sensors.

- A submission consists of the generated XML files and a short description (fewer than 500 words) of the methods used.

- Please create a submission folder structured as follows:

    submission_ch[challenge_number]_[submission_name]/
    ├── [scenario]/
    │   ├── [sensor_type]/
    │   │   └── predictions.xml

- For example, the structure of the submission folder for Challenge 1, submitted by “JohnDoe”, is shown below. It includes prediction files for test image sequences from “GS_Therm” and “UAV_Therm” across the “bg2”, “bg6”, “bg8”, and “cy3” scenarios.

    submission_ch1_JohnDoe/
    ├── bg2/
    │   ├── GS_Therm/
    │   │   └── predictions.xml
    │   ├── UAV_Therm/
    │   │   └── predictions.xml
    ├── bg6/
    │   ├── GS_Therm/
    │   │   └── predictions.xml
    ├── bg8/
    │   ├── GS_Therm/
    │   │   └── predictions.xml
    │   ├── UAV_Therm/
    │   │   └── predictions.xml
    ├── cy3/
    │   ├── UAV_Therm/
    │   │   └── predictions.xml

- Please generate a ZIP file that includes the submission folder and a text file containing a short (<500 word) description of the methodology used. Then, email it to submissions@pets2025.net, providing full author and affiliation details.
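The folder layout and ZIP packaging described above could be automated along the following lines. This is a sketch rather than an official tool: the function name `build_submission`, the description file name `description.txt`, and the ZIP file name are hypothetical choices, and the XML text is assumed to have been generated elsewhere according to the provided schema. For Challenges 1 and 2 the check asks for at least two sensor types; for Challenge 3, where only the thermal UAV sensor is provided, it asks for at least two sequences.

```python
import zipfile
from pathlib import Path


def build_submission(root: Path, challenge: int, name: str,
                     predictions: dict[tuple[str, str], str],
                     description: str) -> Path:
    """Write the submission folder and ZIP; predictions maps (scenario, sensor_type) to XML text."""
    sensors = {sensor for _, sensor in predictions}
    if challenge == 3:
        if len(predictions) < 2:
            raise ValueError("Challenge 3 asks for at least two test sequences")
    elif len(sensors) < 2:
        raise ValueError("results are requested for at least two sensor types")
    if len(description.split()) >= 500:
        raise ValueError("the description should be shorter than 500 words")

    # submission_ch[challenge_number]_[submission_name]/[scenario]/[sensor_type]/predictions.xml
    folder = root / f"submission_ch{challenge}_{name}"
    for (scenario, sensor), xml_text in predictions.items():
        target = folder / scenario / sensor
        target.mkdir(parents=True, exist_ok=True)
        (target / "predictions.xml").write_text(xml_text, encoding="utf-8")

    desc_path = root / "description.txt"  # hypothetical file name
    desc_path.write_text(description, encoding="utf-8")

    zip_path = root / f"{folder.name}.zip"  # hypothetical archive name
    with zipfile.ZipFile(zip_path, "w", zipfile.ZIP_DEFLATED) as archive:
        archive.write(desc_path, desc_path.name)
        for file in sorted(folder.rglob("predictions.xml")):
            # Store paths relative to root so the archive contains the folder itself.
            archive.write(file, file.relative_to(root).as_posix())
    return zip_path
```

The returned archive contains the submission folder and the description file side by side, which mirrors the instruction to zip both together before emailing.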

XML schema

Challenges 1 and 2

- XML schema file: ch1ch2-schema.xsd

- XML output example file: ch1ch2-predictions.xml

Challenge 3

- XML schema file: ch3-schema.xsd

- XML output example file: ch3-predictions.xml

- Please note that the “coordinates” attribute in the XML files follows the format [longitude, latitude].
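Because many libraries and map tools use latitude-first ordering, the [longitude, latitude] order is easy to get wrong. The helpers below are a small sketch of how the order could be kept explicit when reading and writing the attribute value. They assume the value is serialised as a bracketed, comma-separated pair such as `[33.5, 34.9]`; the exact serialisation and numeric precision should be taken from the example file, and the function names and the seven-decimal formatting are hypothetical.

```python
import json


def parse_coordinates(value: str) -> dict[str, float]:
    """Parse an assumed '[longitude, latitude]' string into named fields."""
    longitude, latitude = json.loads(value)
    if not (-180.0 <= longitude <= 180.0 and -90.0 <= latitude <= 90.0):
        # A latitude outside [-90, 90] often means the two values were swapped.
        raise ValueError(f"coordinates out of range, possibly swapped: {value}")
    return {"longitude": float(longitude), "latitude": float(latitude)}


def format_coordinates(longitude: float, latitude: float) -> str:
    """Format a pair in [longitude, latitude] order (precision is an assumption)."""
    return f"[{longitude:.7f}, {latitude:.7f}]"
```

Returning named fields instead of a bare tuple makes accidental swaps less likely downstream. The range check only catches swaps when the longitude magnitude exceeds 90 degrees, so it is a safeguard and not a guarantee.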

Evaluation

- The submitted XML results will be evaluated by the PETS2025 Organising Committee using an appropriate selection of benchmark metrics.

- All submissions will be included in a challenge summary paper, which will be published at AVSS 2025, with all challenge participants listed as co-authors.