EUMETNET is a grouping of 31 European National Meteorological Services that provides a framework for collaboration between its members in the meteorological and hydrological fields. You can find out…

Conferences

ISDA2019 in Japan

by Dr Natalie Douglas, University of Surrey, and Dr Alison Fowler, University of Reading and NCEO

ISDA2019, the 7th International Symposium for Data Assimilation 2019, was hosted in Kobe, Japan…

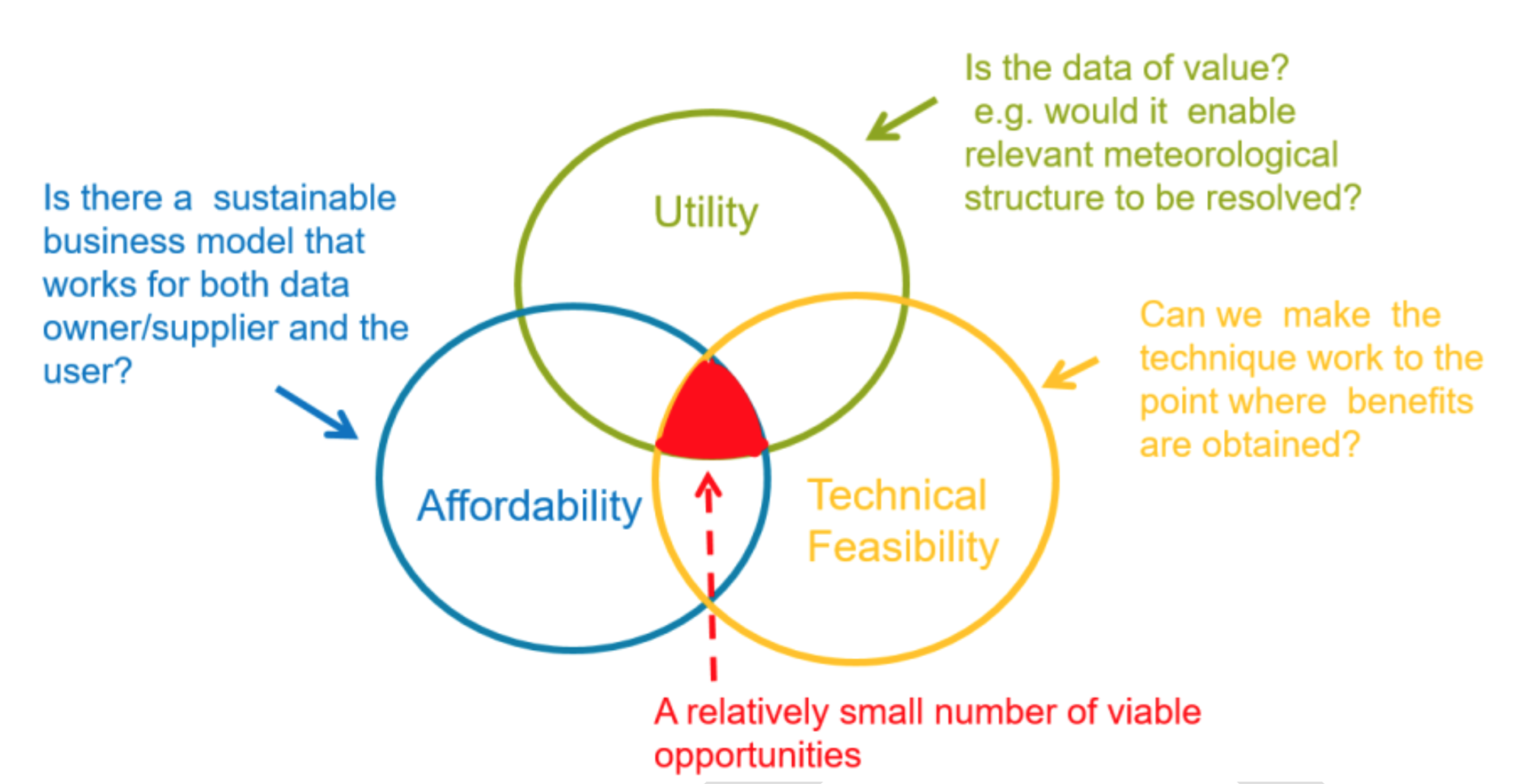

wCROWN: Workshop on Crowdsourced data in Numerical Weather Prediction

by Sarah Dance

On 4-5 December 2018, the Danish Meteorological Institute (DMI) hosted a workshop on crowdsourced data in numerical weather prediction (NWP), attended by Joanne Waller and Sarah…