ECMWF have just published a strategy for machine learning for the next 10 years. The idea is to combine the best that data-driven approaches can provide with the…Read More >

High impact weather

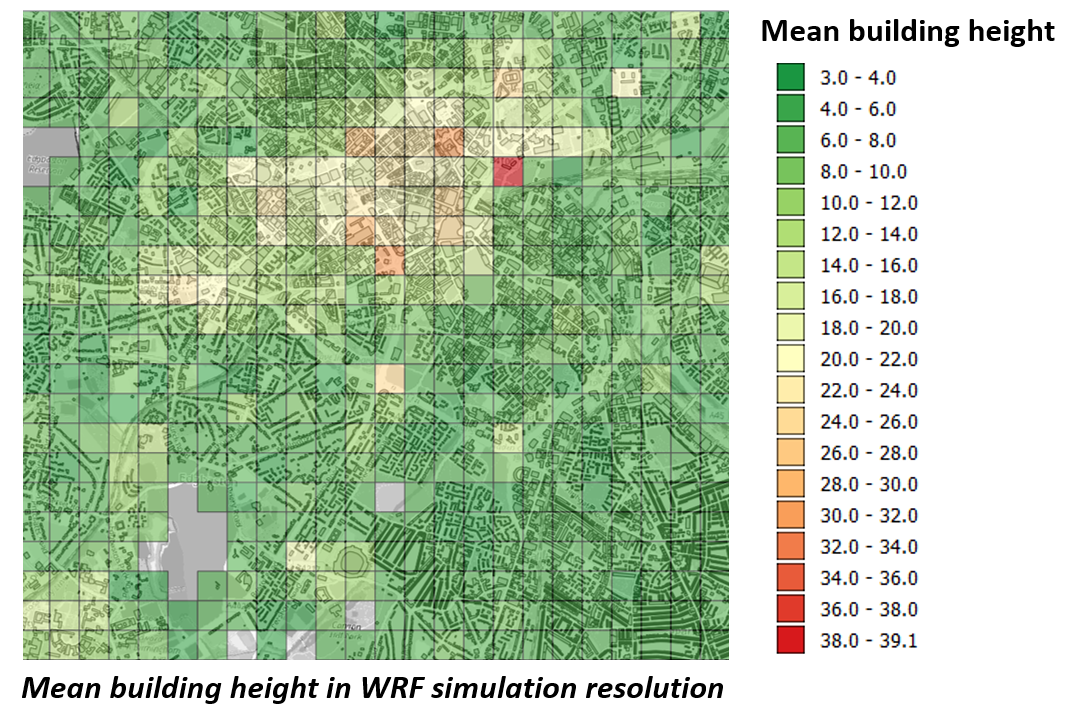

Data-assimilation of crowdsourced weather stations for urban heat and water studies in Birmingham as a testbed

DARE pilot project with participants: Professor Lee Chapman, University of Birmingham; Sytse Koopmans; Gert-Jan Steeneveld, Wageningen University & Research (WUR) The research will be a verification by ground truth observations…Read More >

Investigation of the ability of the renewed UK operational weather radar network to provide accurate real-time rainfall estimates for improved flood warnings.

by Dr RobThompson and Prof Anthony Illingworth, Dept of Meteorology, University of Reading The UK operational radar network has the potential to deliver real-time rainfall estimates every five minutes with…Read More >