by Sukun Cheng, March 2026

The core problem

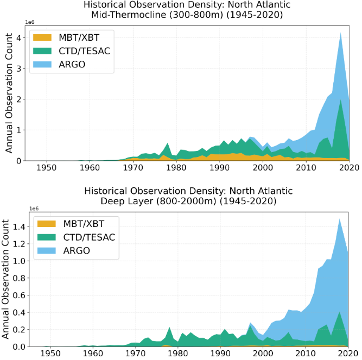

To understand historical ocean changes, researchers produce reanalyses that reconstruct past ocean states by combining numerical models with historical observations. Over the last century, the quality and quantity of observations have changed drastically. The sparse, shallow ship-based measurements of the early 1900s were supplemented in the 1970s by eXpendable BathyThermographs (XBTs), which extended routine sampling into the thermocline. A decisive step change came around 2004 with the Argo programme, which provides near-global, deep-ocean profiling. This shift in observation density creates a fundamental consistency problem: distinguishing genuine climate signals from mere changes in our measurement capabilities (see Figure 1).

In modern ocean reanalyses, data assimilation (DA) regularly updates the model state through increments that pull it toward the observations. During long data-sparse periods, the model drifts significantly away from the true state. When observations are finally assimilated, the accumulated error is corrected all at once, producing spurious pulses in the increments.
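The drift-then-pulse behaviour can be illustrated with a deliberately simple scalar toy model. This is a hypothetical sketch, not the reanalysis system: the function `toy_increments`, the constant drift, the fixed gain and the observation intervals are all assumptions chosen for illustration.

```python
import numpy as np

def toy_increments(n_steps, obs_every, drift=0.05, gain=0.8, seed=0):
    """Scalar toy reanalysis (hypothetical): the true state is 0, the model
    adds a constant error `drift` every step, and every `obs_every` steps a
    noisy observation of the truth is assimilated as
    increment = gain * (obs - forecast)."""
    rng = np.random.default_rng(seed)
    state = 0.0
    increments = np.zeros(n_steps)
    for t in range(n_steps):
        state += drift                      # forecast step: error accumulates
        if t % obs_every == 0:              # analysis step
            obs = 0.01 * rng.standard_normal()
            increments[t] = gain * (obs - state)
            state += increments[t]
    return increments

dense = toy_increments(200, obs_every=1)    # Argo-like: observe every step
sparse = toy_increments(200, obs_every=50)  # early-era-like: rare observations
print(np.abs(dense).max(), np.abs(sparse).max())
```

With rare observations, the accumulated drift is removed in a few large increments rather than many small ones, which is the kind of pulse a smoother has to redistribute.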

Methodological approach

Traditional post-processing algorithms struggle with this temporal discontinuity. The time-dependent smoother developed by Dong et al. (2021) revises each current increment using future increments weighted by an exponential decay. However, it relies on a single, uniform damping parameter, which cannot adapt to local observation density or to the large differences in uncertainty between sparse and data-rich eras.
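To make the role of the fixed damping parameter concrete, here is a minimal sketch of an exponential-decay smoother in NumPy. It is a simplified stand-in, not the exact formulation of Dong et al. (2021): the normalised weighted average, the function name `exp_decay_smoother` and the parameters `tau` and `window` are assumptions.

```python
import numpy as np

def exp_decay_smoother(increments, tau, window):
    """Hypothetical simplified stand-in for an exponential-decay smoother:
    the increment at time t is replaced by a weighted average of the
    increments at t, t+1, ..., t+window, with weights exp(-k / tau).
    One fixed decay scale `tau` is used for every time and location."""
    inc = np.asarray(increments, dtype=float)
    weights = np.exp(-np.arange(window + 1) / tau)
    n = inc.size
    smoothed = np.empty(n)
    for t in range(n):
        m = min(window + 1, n - t)          # truncate at the end of the record
        w = weights[:m]
        smoothed[t] = np.dot(w, inc[t:t + m]) / w.sum()
    return smoothed

# A single assimilation pulse at t = 10 is spread backward over earlier steps.
pulse = np.zeros(20)
pulse[10] = 1.0
print(np.round(exp_decay_smoother(pulse, tau=2.0, window=5), 3))
```

Whatever the observation density, every increment is smoothed with the same `tau`, which is exactly the rigidity the AI smoother aims to remove.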

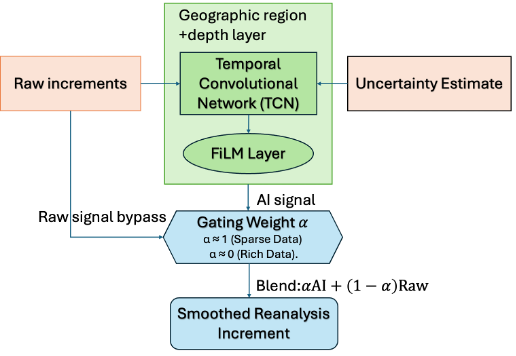

We are investigating an AI smoother to replace this rigid parameterization, drawing direct inspiration from video generation technologies that interpolate continuous motion from sparse, isolated frames. We follow the core philosophy of Dong et al. (2021): learning from the “experience” of future increments to revise the increment at the target time, while relying on the spatial error covariance already estimated by the DA system. To do this, a Temporal Convolutional Network (TCN) is trained to learn the temporal structure of ocean increments. This allows the network to redistribute erratic corrections in a physically consistent way and to adapt as observation density changes. The TCN takes as input a window of raw increments together with the local ensemble spread, which represents uncertainty. Because near-surface dynamics differ from those of the deep thermocline, a Feature-wise Linear Modulation (FiLM) layer adapts the network’s behavior to depth. Figure 2 shows a flowchart of the AI smoothing process.
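The architecture described above can be sketched as a forward pass in NumPy. This is a hypothetical, untrained illustration rather than the author's network: the layer sizes, the kernel size of 3, the dilations (1, 2, 4), the linear maps from a normalised depth to the FiLM scale and shift, and all function names are assumptions, and the residual connections usual in TCNs are omitted for brevity. The convolutions are non-causal, so each output can draw on past and future increments.

```python
import numpy as np

rng = np.random.default_rng(0)

def dilated_conv1d(x, w, b, dilation):
    """Non-causal dilated 1-D convolution with zero 'same' padding.
    x: (c_in, T), w: (c_out, c_in, k) with odd k, b: (c_out,)."""
    c_out, _, k = w.shape
    pad = dilation * (k - 1) // 2
    xp = np.pad(x, ((0, 0), (pad, pad)))
    T = x.shape[1]
    y = np.zeros((c_out, T))
    for j in range(k):
        # tap j sees time offset j*dilation - pad (past, present and future)
        y += w[:, :, j] @ xp[:, j * dilation: j * dilation + T]
    return y + b[:, None]

def film(h, depth, wg, bg, wb, bb):
    """FiLM: per-channel scale (gamma) and shift (beta) computed from depth."""
    gamma = wg * depth + bg
    beta = wb * depth + bb
    return gamma[:, None] * h + beta[:, None]

def init_params(channels=8, kernel=3, dilations=(1, 2, 4)):
    layers, c_in = [], 2  # two input channels: increments and ensemble spread
    for d in dilations:
        layers.append({
            "w": 0.1 * rng.standard_normal((channels, c_in, kernel)),
            "b": np.zeros(channels),
            "dilation": d,
            # (wg, bg, wb, bb): gamma starts near 1 and beta near 0
            "film": (0.1 * rng.standard_normal(channels), np.ones(channels),
                     0.1 * rng.standard_normal(channels), np.zeros(channels)),
        })
        c_in = channels
    return {"layers": layers,
            "w_out": 0.1 * rng.standard_normal((1, channels)),
            "b_out": np.zeros(1)}

def tcn_film_forward(increments, spread, depth, params):
    h = np.stack([increments, spread])  # (2, T)
    for layer in params["layers"]:
        h = dilated_conv1d(h, layer["w"], layer["b"], layer["dilation"])
        h = np.maximum(film(h, depth, *layer["film"]), 0.0)  # FiLM, then ReLU
    # 1x1 projection back to a single smoothed-increment channel
    return (params["w_out"] @ h + params["b_out"][:, None])[0]

params = init_params()
T = 120  # hypothetical window length
inc = rng.standard_normal(T)
spread = np.abs(rng.standard_normal(T))
out_shallow = tcn_film_forward(inc, spread, depth=0.0, params=params)
out_deep = tcn_film_forward(inc, spread, depth=1.0, params=params)
```

The same convolution weights are shared across depths; only the FiLM scale and shift change, which is what lets one network behave differently near the surface and in the thermocline.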

Crucially, the smoother learns a gating weight between 0 and 1 that blends the AI-smoothed signal with the original raw increment. During data-sparse eras the gate opens, trusting the AI to redistribute corrections. During data-rich eras it closes, keeping the output conservative and close to the raw increments while mainly smoothing their high-frequency noise. An explicit physical conservation constraint guarantees that total heat content is strictly preserved over multi-year integration periods, so the smoother redistributes corrections in time rather than adding or removing heat.
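The gate and the conservation constraint can be sketched in a few lines of NumPy. This is a hedged illustration: how the actual smoother computes the gate and enforces conservation is not described here, so the uniform redistribution of the residual, the use of summed temperature increments as a proxy for heat content (a real heat budget would also need density, heat capacity and layer thickness), the synthetic inputs and the function names are assumptions.

```python
import numpy as np

def gated_blend(raw, ai, gate):
    """Per-time-step blend: gate = 1 trusts the AI output, gate = 0 keeps raw."""
    g = np.clip(gate, 0.0, 1.0)
    return g * ai + (1.0 - g) * raw

def enforce_conservation(smoothed, raw):
    """Hypothetical constraint: make the summed increment (a proxy for the
    total heat injected over the period) match the raw increments by
    spreading the mismatch uniformly in time."""
    residual = raw.sum() - smoothed.sum()
    return smoothed + residual / smoothed.size

raw = np.zeros(24)
raw[6] = 3.0                                         # pulse in a data-sparse era
raw[12:] = 0.2 + 0.05 * np.resize([1.0, -1.0], 12)   # noisy data-rich era
ai = np.full(24, raw.sum() / 24)                     # stand-in for the AI output
gate = np.where(np.arange(24) < 12, 0.9, 0.1)        # open early, closed late
out = enforce_conservation(gated_blend(raw, ai, gate), raw)
print(np.isclose(out.sum(), raw.sum()))  # True
```

Spreading the residual uniformly is the simplest choice; the blend alone would not conserve the total, which is why the constraint is applied after the gate.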

Current findings

Results from the mid-thermocline (300–800 m) and the deep ocean (800–2000 m) show that the AI smoother clearly outperforms the Dong baseline. It reduces high-frequency noise by an order of magnitude while improving low-frequency signal retention, raising the correlation of multi-year variability from 0.88 to 0.99. Spurious pulses in the pre-Argo period are largely eliminated.
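The kinds of summary metrics quoted above can be made concrete with a simple frequency split. Their exact definitions are not given here, so this sketch is an assumption throughout: a centred running mean separates low- from high-frequency variability, "noise" is the standard deviation of the high-frequency residual, and the multi-year correlation is the Pearson correlation of the low-pass series against a reference. The 25-step window, the function names and the synthetic data are illustrative only.

```python
import numpy as np

def split_frequencies(x, window):
    """Centred running mean (odd `window`) as the low-frequency part; the
    residual on the same time steps is the high-frequency part."""
    half = window // 2
    low = np.convolve(x, np.ones(window) / window, mode="valid")
    high = x[half:len(x) - half] - low
    return low, high

def evaluate(smoothed, reference, window=25):
    low_s, high_s = split_frequencies(smoothed, window)
    low_r, _ = split_frequencies(reference, window)
    return {"hf_noise_std": high_s.std(),
            "lf_correlation": np.corrcoef(low_s, low_r)[0, 1]}

rng = np.random.default_rng(1)
t = np.arange(600)                      # e.g. 50 years of monthly values
signal = np.sin(2 * np.pi * t / 120)    # synthetic 10-year oscillation
noisy = signal + 0.5 * rng.standard_normal(t.size)
cleaner = signal + 0.05 * rng.standard_normal(t.size)
print(evaluate(noisy, signal), evaluate(cleaner, signal))
```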

This remains an active research problem. The near-surface (0–20 m) and the deep abyss (below 2000 m) present distinct challenges. Surface increments lack temporal structure and resemble white noise, forcing us to drastically narrow the network’s temporal receptive field to prevent overfitting. The abyss, conversely, suffers from extremely slow dynamics and almost no observations. To separate physical signals from noise in these layers, we plan to use the CIGAR CS project’s 1-degree resolution models, whose 40-member ensemble explicitly maps uncertainty, a critical advantage over deterministic high-resolution models.
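Two quantities in this paragraph are straightforward to compute. For a stack of stride-1 dilated convolutions with kernel size k and dilations d_i, the receptive field is 1 + (k - 1) * sum(d_i) time steps, so narrowing it means using fewer or smaller dilations. The ensemble spread is the standard deviation across members. The kernel sizes, dilations, random stand-in ensemble and function names below are hypothetical, not the settings used in this work.

```python
import numpy as np

def receptive_field(kernel_size, dilations):
    """Time steps visible to one output of stacked dilated 1-D convolutions."""
    return 1 + (kernel_size - 1) * sum(dilations)

def ensemble_spread(members):
    """Per-point ensemble standard deviation; members has shape (n_members, ...)."""
    return np.std(members, axis=0, ddof=1)

# Hypothetical configurations, not the settings used in this work
print(receptive_field(3, [1, 2, 4, 8]))  # 31: wide window, e.g. for the thermocline
print(receptive_field(3, [1]))           # 3: narrow window for white-noise-like surface

members = np.random.default_rng(2).standard_normal((40, 120))  # 40 members x 120 steps
print(ensemble_spread(members).shape)    # (120,)
```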

Broader impact

A temporally consistent reanalysis is essential for oceanographers studying critical climate change indicators, ensuring observed shifts are physical rather than observational artefacts. Operational services stand to gain immediate product quality improvements. More broadly, this demonstrates how machine learning can function as an intelligent, physics-constrained post-processor embedded directly within the DA workflow, building upon traditional knowledge rather than blindly replacing it.

References

Dong et al. 2021: Improved high resolution ocean reanalyses using a simple smoother algorithm. JAMES. https://doi.org/10.1029/2021MS002626